Migrating 200 Debian VMs from Hyper-V to VMware

Back in November I was tasked with orchestrating the migration of over 200 virtual machines from our existing Hyper-V infrastructure onto our shiny new VMware-based infrastructure.

This came with challenges: downtime needed to be minimised, and the whole process needed to go as smoothly as possible. With our Hyper-V servers rapidly running out of warranty and support, it also needed to be done quickly.

Windows VMs (mostly) went smoothly, so this post is going to focus on the guest OS that made up the majority of our fleet - Debian 9.

After migrating a few test VMs, it was clear that there were two main stages of the migration process:

- Initially migrating the VM and getting it to boot

- Configuring network interfaces and packages once running on VMware

As it’s already well documented, this blog post won’t go into detail regarding the process of shifting the VM disks from Hyper-V to VMware. For the record, we used VMware Converter, installed its server on a new VM, and installed the client application on our workstations. This worked incredibly well for us, and sped the process up a lot.

Once a VM was migrated, the operating system would refuse to boot. A few steps needed to be taken in order to get them to boot correctly, but this became muscle memory after a handful of VMs had been done.

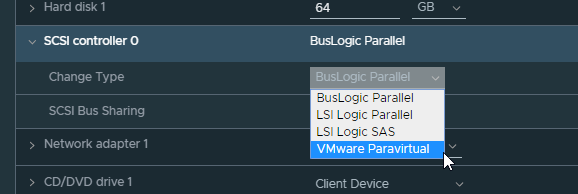

Firstly, the newly created VM would have its SCSI controller set to “BusLogic Parallel”. This needed to be changed to “VMware Paravirtual” in order for the disk to be recognised at all.

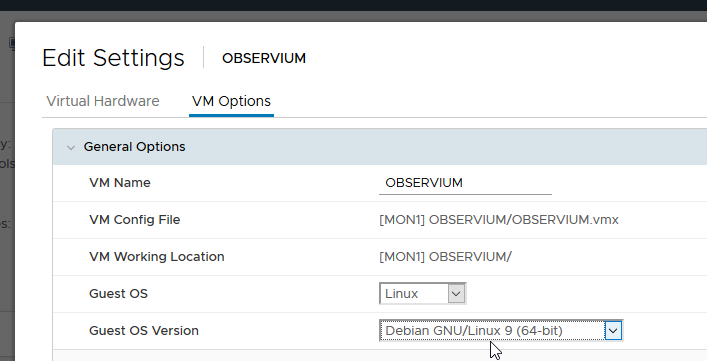

Then, the Guest OS setting needed to be corrected, and the VM needed to be forced into the EFI setup screen on its next boot.

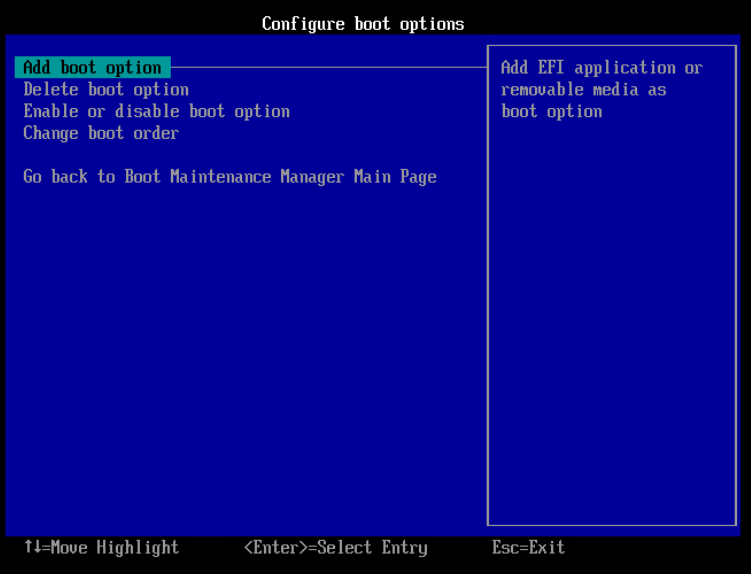
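For anyone who would rather script these settings than click through the UI, here is a minimal sketch of the same three changes applied to the text of a powered-off VM's `.vmx` file. We made the changes through the UI, so treat this as an illustration only. It assumes the disk hangs off controller `scsi0` and that `debian9-64` is the right guest OS identifier for your VMs. The function name `apply_vmx_settings` is made up for this example.

```python
import re

# Assumed .vmx keys for the changes described above; check them against
# a VM you have already fixed by hand before relying on them.
DESIRED = {
    "scsi0.virtualDev": "pvscsi",    # VMware Paravirtual SCSI controller
    "guestOS": "debian9-64",         # assumed identifier for Debian 9 (64-bit)
    "bios.forceSetupOnce": "TRUE",   # enter firmware setup on the next boot only
}

# Matches lines of the form: key = "value"
LINE_RE = re.compile(r'^\s*([^#=\s]+)\s*=\s*"(.*)"\s*$')


def apply_vmx_settings(vmx_text, desired=DESIRED):
    """Return vmx_text with every key in `desired` set, replacing or appending."""
    remaining = dict(desired)
    out = []
    for line in vmx_text.splitlines():
        m = LINE_RE.match(line)
        key = m.group(1) if m else None
        # Compare keys case-insensitively so "guestos" and "guestOS" both match.
        hit = next((k for k in remaining if key and k.lower() == key.lower()), None)
        if hit is not None:
            out.append(f'{hit} = "{remaining.pop(hit)}"')
        else:
            out.append(line)
    # Any key that was not already present gets appended at the end.
    for k, v in remaining.items():
        out.append(f'{k} = "{v}"')
    return "\n".join(out) + "\n"
```

The function only transforms text, so it can be tried against a copy of a `.vmx` file and the result diffed before anything is written back.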

Power up the VM, and open the console. Use the arrow keys to navigate down and select the “Enter Setup” menu, then “Configure boot options”, then “Add boot option”.

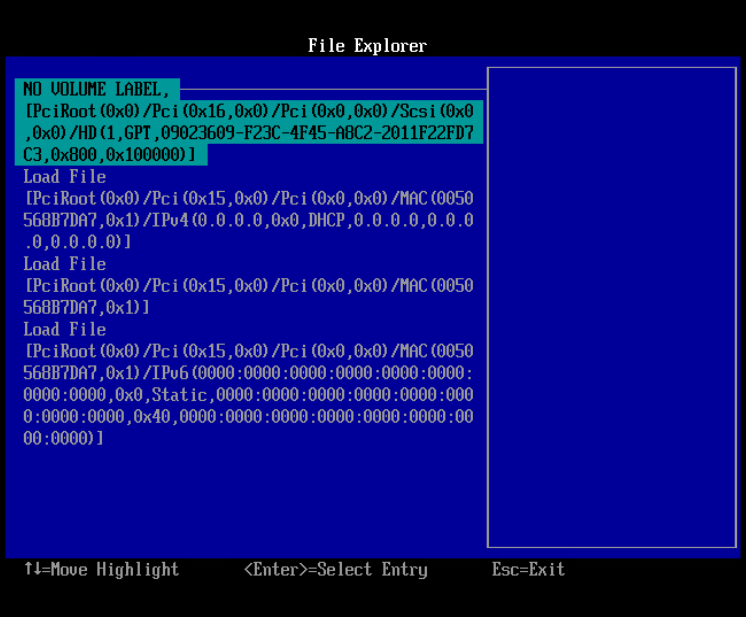

Then, you need to select the volume you’d like to add as a boot device. This is likely to be the first option, which was labelled “NO VOLUME LABEL” on every VM we migrated.

Once you’ve selected this disk, navigate through the file structure until you locate grubx64.efi (on a default Debian install this is typically under EFI/debian/), and select it. Scroll down to the “Input the description” option and enter a sensible label. We used “Debian 9” for all of ours. Then select “Commit changes and exit”.

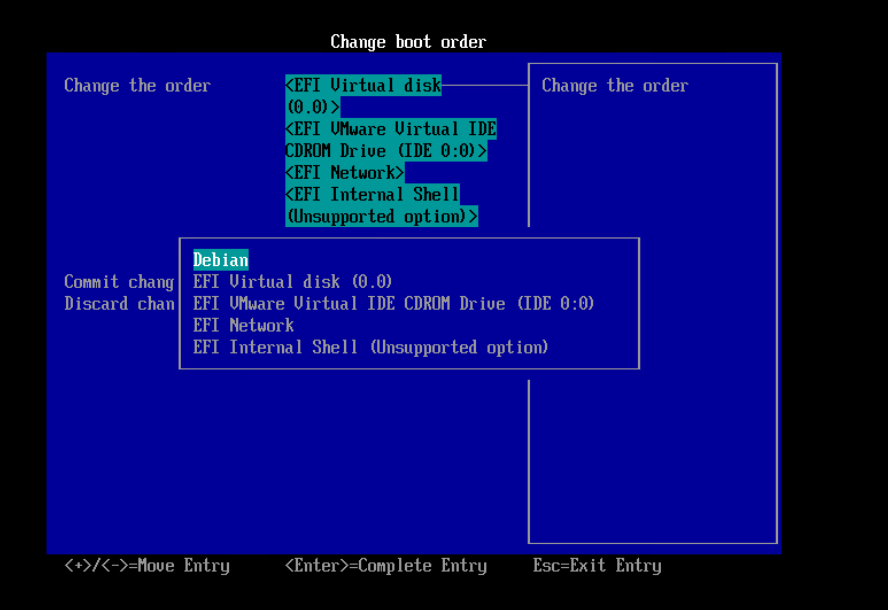

Navigate back a few menus, and select “Change boot order” and then “Change the order”.

Use the + key to move your new entry all the way to the top, and press Enter. Then select “Commit changes and exit”, “Exit the Boot Maintenance Manager”, then “Reset the System”.

The VM should now reset and successfully boot into your Debian operating system.

Once the VM was up and running on VMware, we were 99% of the way there. All that remained was installing open-vm-tools and configuring the network interfaces. We found that once a VM had been migrated to VMware, the interface previously named eth0 came up as ens192. Some of these VMs were quite old and didn’t use systemd’s Predictable Network Interface Names, and we have a number of scripts hardcoded to use eth0 for networking.

To speed this process up and automate as much of it as possible, we used the following script:

[Script listing not preserved.]

This script was packaged as a Debian package called vmwaremigrate, which was pre-installed on all our VMs prior to the migration. This meant that all that needed to be done was to log in to the VM through the console, run vmwaremigrate, and you were done!
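As a rough illustration of the kind of work described above, and not the actual vmwaremigrate script, here is a minimal Python sketch. It assumes the VM uses ifupdown with its configuration in `/etc/network/interfaces`, and that the fix is to rename `eth0` to `ens192` in that file. Booting with `net.ifnames=0` on the kernel command line to keep the old name would be another option. The helper names `rename_interface` and `migrate` and the `.pre-vmware` backup suffix are invented for this example.

```python
import re
import shutil
import subprocess


def rename_interface(interfaces_text, old="eth0", new="ens192"):
    """Rename `old` to `new` wherever it appears as a whole interface name."""
    # Lookarounds keep longer names such as "veth0" or "eth01" untouched,
    # while aliases ("eth0:1") and VLANs ("eth0.10") are still renamed.
    pattern = re.compile(r"(?<![\w.-])" + re.escape(old) + r"(?![\w-])")
    return pattern.sub(new, interfaces_text)


def migrate(path="/etc/network/interfaces", old="eth0", new="ens192",
            run=subprocess.check_call):
    """Fix the interface name, bring the link up, then install open-vm-tools."""
    # Keep a copy of the original file so the change is easy to roll back.
    shutil.copy2(path, path + ".pre-vmware")
    with open(path) as fh:
        original = fh.read()
    with open(path, "w") as fh:
        fh.write(rename_interface(original, old, new))

    # Networking has to be up before apt can reach a remote mirror.
    run(["ifup", new])
    run(["apt-get", "update"])
    run(["apt-get", "install", "-y", "open-vm-tools"])
```

Run as root from the VM console, calling `migrate()` covers both remaining steps. The `run` parameter exists so the command sequence can be checked with a stand-in function before it touches a real machine.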

Overall, I’m very happy with how smoothly this process went. My colleagues and I managed to successfully migrate over 200 virtual machines in just shy of 3 weeks, limiting ourselves to around 10 a day to minimise disruption to users.